My blog is on a custom domain with GitHub Pages. As it turns out, this configuration can cause some issues, for example, when the site is indexed by a search engine.

A few people have already posted about this, and an update at the top of one of those posts says: “This is no longer true since at least August 2014. GitHub fixed this!” This note might refer to the bigger issue of the 5 second delay described in that post, although it made me think that maybe GitHub does not do redirects anymore. It still does, sometimes:

    $ curl -I rovrov.com
    HTTP/1.1 302 Found
    Connection: close
    Pragma: no-cache
    cache-control: no-cache
    Location: /

Note: this does not happen every time, but after a certain timeout the first request often gets redirected.

Basically, the GitHub Pages server replies with a 302 temporary redirect pointing to the same location as requested. The browser then has to request the exact same URL once again and hope not to see a 302 this time. When I asked GitHub support if there is something I can do about this, I got this not very encouraging response:
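To make the "redirect to itself" case concrete, here is a minimal Python sketch that decides whether a response is a redirect back to the URL that was just requested. The function name `is_self_redirect` is hypothetical, and treating an empty path and `/` as the same resource is my own assumption; it only illustrates the check, not anything GitHub or a browser actually runs.

```python
from urllib.parse import urljoin, urlsplit


def is_self_redirect(requested_url, status, location):
    """Return True if a 3xx response points back at the requested URL."""
    if status not in (301, 302, 303, 307, 308) or not location:
        return False
    # Resolve a relative Location such as "/" against the requested URL.
    target = urlsplit(urljoin(requested_url, location))
    source = urlsplit(requested_url)
    # Assumption: an empty path and "/" name the same resource.
    return (
        target.scheme == source.scheme
        and target.netloc.lower() == source.netloc.lower()
        and (target.path or "/") == (source.path or "/")
        and target.query == source.query
    )
```

With the response shown above, `is_self_redirect("http://rovrov.com", 302, "/")` evaluates to true, while a redirect to a different host or path does not count.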

you will sometimes receive a 302 Found response in place of a 200 OK response from GitHub Pages sites because of DDoS mitigation software we have in place for all Pages sites

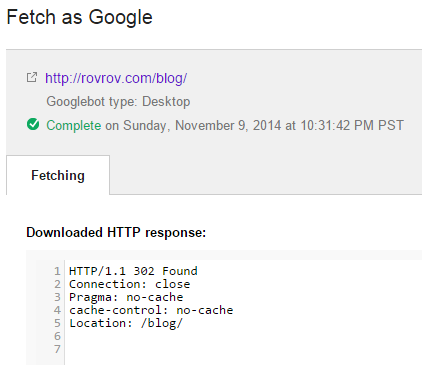

Why is this bad for users? Apart from the delay due to the additional round-trip(s) to the server, this may cause issues with search engines. I would expect crawlers to be smart enough to handle this kind of redirect. However, since for some reason my blog was not being properly indexed by Google, I submitted a link to Fetch as Google. Well, guess what:

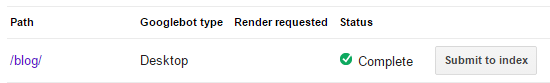

While it obviously did not follow the redirect, it displays as ✔Complete in the list and offers to Submit to Index without any warning whatsoever.

Right, submitting empty content to the index sounds like the way to go. I had to fetch some of my URLs twice and verify that they got a proper response before submitting. I hope crawlers are a bit smarter in this regard, but you never know what they might have on their mind.
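The "fetch twice and verify" routine can also be scripted. The sketch below is only an illustration under my own assumptions: `head_status` and `wait_for_ok` are hypothetical names, the retry count and delay are arbitrary, and it says nothing about how Fetch as Google or any crawler behaves. It sends a HEAD request without following redirects (like `curl -I`) and repeats it until a 200 comes back.

```python
import http.client
import time
from urllib.parse import urlsplit


def head_status(url):
    """Send one HEAD request without following redirects.

    Returns a (status, Location header or None) tuple.
    """
    parts = urlsplit(url)
    if parts.scheme == "https":
        conn = http.client.HTTPSConnection(parts.netloc, timeout=10)
    else:
        conn = http.client.HTTPConnection(parts.netloc, timeout=10)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    try:
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def wait_for_ok(url, fetch=head_status, attempts=3, delay=1.0):
    """Re-request url until it answers 200.

    Returns the number of tries it took, or None if every try failed.
    """
    for attempt in range(1, attempts + 1):
        status, _location = fetch(url)
        if status == 200:
            return attempt
        if attempt < attempts:
            time.sleep(delay)  # brief pause before asking again
    return None
```

Calling `wait_for_ok("http://rovrov.com/")` before submitting a URL would report how many requests it took to get past the 302. The `fetch` parameter exists only so the loop can be exercised without touching the network.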

According to some posts on this topic, a workaround is to configure a sub-domain such as www. through a CNAME pointing to user.github.io. I guess I will stick with this if it works better, though I am not a huge fan of the www prefix. *Sigh*